Day 113: Backend Performance

What We Build Today

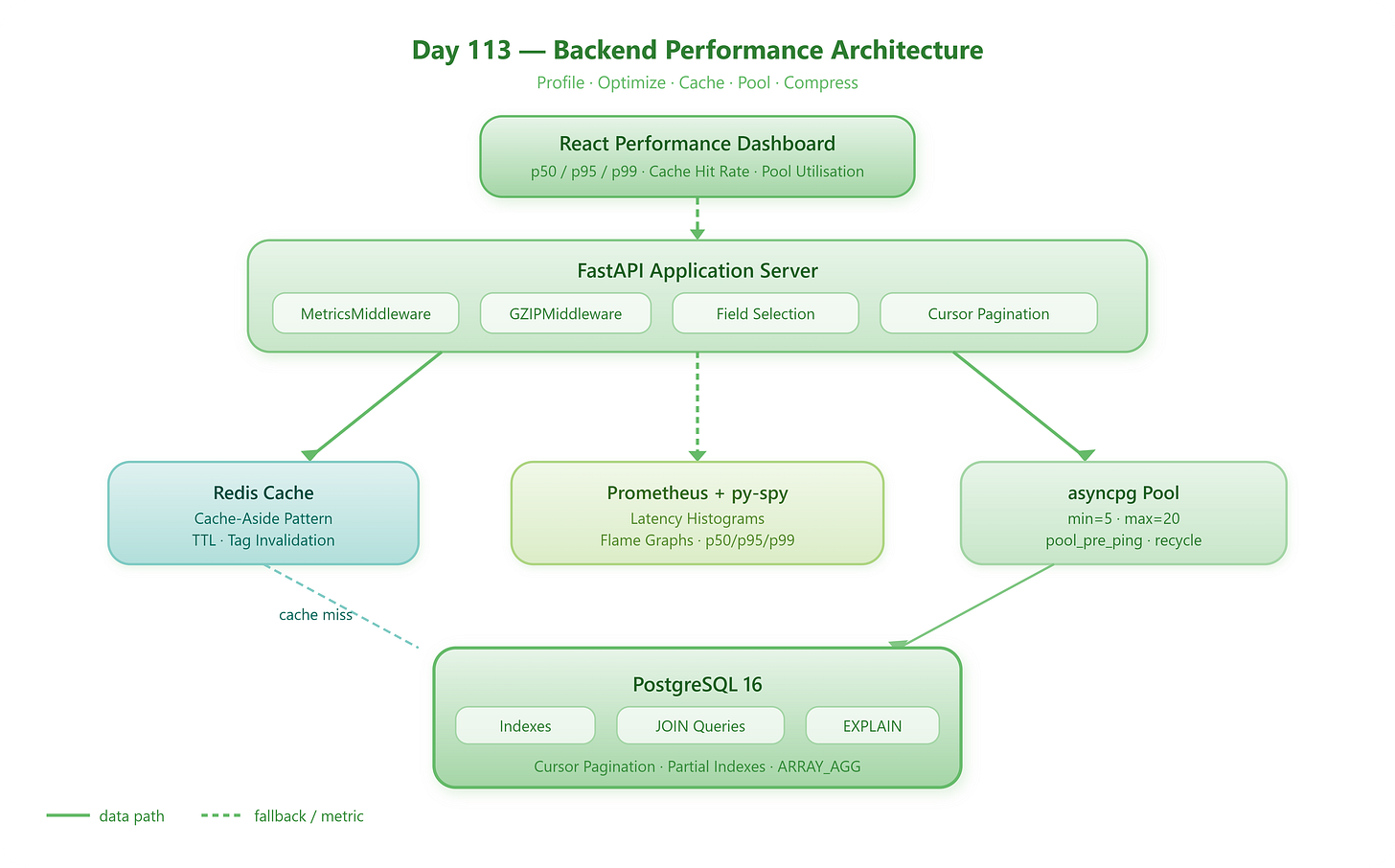

By the end of this lesson you will have a fully instrumented FastAPI backend that:

- Profiles every request with real latency histograms using `py-spy` + Prometheus
- Rewrites slow N+1 database queries into lean JOIN-based queries with `EXPLAIN ANALYZE`
- Layers a Redis cache with intelligent TTL and cache-aside patterns
- Pools PostgreSQL connections via `asyncpg` + SQLAlchemy async
- Compresses and shapes API responses with field selection, pagination cursors, and `msgpack`
- Ships a live Performance Dashboard in React showing p50/p95/p99 latencies, cache hit rates, and pool utilisation, styled after Datadog’s APM UI
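Of the techniques listed above, cache-aside with a TTL is the easiest to show in isolation. The sketch below is a minimal illustration, assuming an in-memory dictionary of `(value, expires_at)` pairs as a stand-in for Redis (in a real service this would be a Redis `GET`, and on a miss a `SET key value EX <seconds>`). `TTLCache`, `cache_aside`, and `load_host` are hypothetical names, not part of the lesson's codebase. The clock is injectable so TTL expiry can be tested without sleeping.

```python
import time
from typing import Any, Callable, Optional


class TTLCache:
    """Hypothetical in-memory stand-in for Redis: each key maps to (value, expires_at)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._store: dict[str, tuple[Any, float]] = {}
        self._clock = clock
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> Optional[Any]:
        entry = self._store.get(key)
        if entry is None:
            self.misses += 1
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            # Entry is past its TTL: evict it on read and count a miss.
            del self._store[key]
            self.misses += 1
            return None
        self.hits += 1
        return value

    def set(self, key: str, value: Any, ttl_seconds: float) -> None:
        # Store the value together with its absolute expiry time.
        self._store[key] = (value, self._clock() + ttl_seconds)

    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def cache_aside(cache: TTLCache, key: str, loader: Callable[[], Any], ttl_seconds: float) -> Any:
    """Cache-aside read: try the cache, fall back to the loader, then populate the cache."""
    cached = cache.get(key)
    if cached is not None:  # note: a cached None is treated as a miss in this sketch
        return cached
    value = loader()
    cache.set(key, value, ttl_seconds)
    return value


if __name__ == "__main__":
    now = [0.0]  # fake clock so the demo does not need to sleep
    cache = TTLCache(clock=lambda: now[0])
    db_calls = []

    def load_host() -> dict:  # hypothetical stand-in for a PostgreSQL query
        db_calls.append(1)
        return {"host": "web-01", "status": "healthy"}

    cache_aside(cache, "host:web-01", load_host, ttl_seconds=30)  # miss, hits the DB
    cache_aside(cache, "host:web-01", load_host, ttl_seconds=30)  # served from cache
    now[0] = 31.0
    cache_aside(cache, "host:web-01", load_host, ttl_seconds=30)  # expired, hits the DB again
    print(len(db_calls), cache.hits, cache.misses)  # 2 1 2
```

The database is only touched on a miss or after the TTL lapses, and the hit/miss counters are the kind of raw numbers a cache-hit-rate panel could be built from. Because `None` doubles as the miss signal here, a real implementation that needs to cache `None` would use a sentinel value instead.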

Prerequisites: Python 3.12+, Node.js 18+, Docker Desktop running, and the build.sh / stop.sh scripts from the project implementation package.

Part 1 — The Concepts

1. Why Backend Performance Is Non-Negotiable

A widely cited figure holds that every additional 100 ms of latency cost Amazon roughly 1% of sales. The number traces back to Amazon's internal experiments rather than a published study, yet it has become an industry reference point. At the infrastructure management layer we are building, a slow API means slow dashboards, delayed alert acknowledgement, and engineers staring at loading spinners during live incidents. Performance is a feature, not an afterthought.